Snowflake Snapshots / Backups

How and why to use Snapshots/Backups in Snowflake

I’m sure I am not the only one: Every now and then someone approaches me with a question like “I pulled some data from this report some weeks/months ago and when I open the same report now, the numbers changed - what happened?”

Sometimes it’s possible to reconstruct the applied filters and exactly reproduce the numbers, but if too much time passed… well, who remembers what they filtered in a report last week, let alone 6 months ago?

This situation can be quite annoying: it’s difficult to reproduce outputs of a data model when the raw data refreshed several times in between and there might even have happened some adjustment in the data model.

The solution: create a copy of modeled data just to have it available for such situations.

Sure, you can include that in your transformation pipeline (e.g. using dbt), but in case you’re lucky enough to host your models in Snowflake, there now (announced in June 2025 and as of this writing in open preview) is a new kid on the block:

Backups for disaster recovery and immutable storage

Backups represent discrete snapshots of Snowflake objects. You choose which objects to back up, how frequently to back them up, how long to keep the backups, and whether to add a retention lock so that they can’t be deleted prematurely.

Create snapshots

I happen to arrange my prepared data models in schemas. Hence, taking a snapshot of a whole schema containing all materialized tables (and view definitions if there are any) is my preferred method to backup those models. Of course you could just as well create backups of individual schema objects (tables) or whole catalogs (databases).

First, we’ll create a snapshot policy defining how often snapshots are taken. Depending on the frequency, the maximum retention period differs (during the preview period anyway). For daily snapshots (I don’t refresh my models more often?) you can set up to 1 year retention:

create or replace backup policy consume.fundraising.bp_data_model_daily

schedule = '1440 MINUTE'

expire_after_days = 366

comment = 'Daily backups of the fundraising data model'

;In theory you could use CRON here, too, but then the retention might have an issue with identifying its maximum value. Or, alternatively, not set a schedule at all and just use a task to create a new snapshot right after the model is refreshed (cf. below).

Next, define the stuff to be included in the snapshots:

create or replace backup set consume.fundraising.bs_data_model_daily

for schema consume.fundraising

with snapshot policy consume.fundraising.bp_data_model_daily

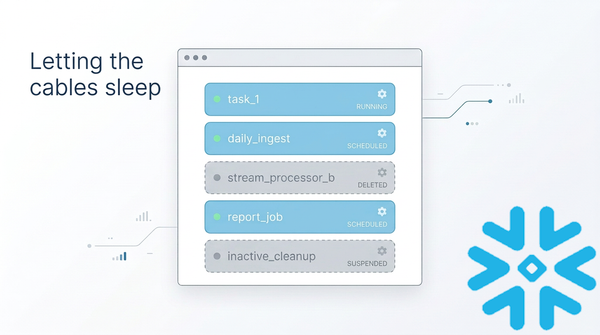

;create or alter task consume.fundraising.ta_refresh_fundraising_snapshot

user_task_managed_initial_warehouse_size = 'XSMALL'

serverless_task_max_statement_size = 'XSMALL'

user_task_timeout_ms = 600000 -- 10 minutes

target_completion_interval = '10 MINUTES'

allow_overlapping_execution = false

comment = 'Take snapshot of fundraising data model'

after consume.fundraising.ta_refresh_fundraising_model

as

alter backup set consume.fundraising.bs_data_model_daily add backup

;And that’s really it. Now every day a snapshot of the model is stored (for a year) and whenever someone want’s to look back in time, you can simply restore a snapshot.

Restore snapshots

First, identify all snapshots currently available:

show backups in backup set consume.fundraising.bs_data_model_daily;This will provide a table of snapshots and their IDs. Pick the ID of whichever snapshot you want to restore and create a new schema from the restored snapshot:

create schema consume.fundraising_<suffix_for_the_restored_snapshot>

from snapshot set consume.fundraising.bs_data_model_daily

identifier '<snapshot_id>'

;If you now point your report to this schema, it will display the data just as it was materialized back when the snapshot was created.

Clean up snapshots

You probably don’t need to keep all (daily) snapshots for the whole retention period (though storage costs are presumably not too significant anyway). I personally like to keep a monthly snapshot for a long period and daily snapshots for just a few weeks. This can easily be done by using two parallel backup policies and sets:

create or replace backup policy consume.fundraising.bp_data_model_daily

-- schedule = '1440 MINUTE' -- triggered by task

expire_after_days = 90

comment = 'Daily backups of the fundraising data model'

;

create or replace backup policy consume.fundraising.bp_data_model_monthly

-- schedule = '1440 MINUTE' -- triggered by task

expire_after_days = 3653

comment = 'Monthly backups of the fundraising data model'

;

show backup policies in consume.fundraising;

create or replace backup set consume.fundraising.bs_data_model_daily

for schema consume.fundraising

with backup policy consume.fundraising.bp_data_model_daily

;

create or replace backup set consume.fundraising.bs_data_model_monthly

for schema consume.fundraising

with backup policy consume.fundraising.bp_data_model_monthly

;

show backup sets in consume.fundraising;And then, in my chain of tasks refreshing the schema, I'll add a downstream task to populate both sets:

create or alter task consume.fundraising.ta_refresh_fundraising_snapshot

user_task_managed_initial_warehouse_size = 'XSMALL'

serverless_task_max_statement_size = 'XSMALL'

user_task_timeout_ms = 600000 -- 10 minutes

target_completion_interval = '10 MINUTES'

allow_overlapping_execution = false

comment = 'Take snapshot of fundraising data model'

after consume.fundraising.ta_refresh_fundraising_model

as

declare

backup_exists boolean default false;

res resultset;

begin

-- always take the daily backup

alter backup set consume.fundraising.bs_data_model_daily add backup;

-- monthly backup only if none exists for the current month

res := (show backups in backup set consume.fundraising.bs_data_model_monthly);

let c cursor for res;

for row_var in c do

if (date_trunc('month', row_var."created_on") = date_trunc('month', current_date())) then

backup_exists := true;

end if;

end for;

if (not backup_exists) then

alter backup set consume.fundraising.bs_data_model_monthly add backup;

end if;

end;

;

alter task consume.fundraising.ta_refresh_fundraising_snapshot resume;Notes

This method works really well if there are no dependencies outside the snapshot schema. Once again, efficiency depends on discipline: If you stick to a strict layer-architecture during modeling, it’ll be beneficial here.

This snapshot feature created less fuzz than many other features Snowflake released this year. However fancy all the other features are, I believe it’s nevertheless worth noting how such less-fancy improvements can have a significant impact on convenience and efficiency in the daily life of a data engineer/analyst 🥹