Exporting Snowflake data to Excel

Generate XLSX files directly from Snowflake using a small Snowpark stored procedure, writing straight to a stage so stakeholders get ready-to-use Excel workbooks without any CSV exports or manual conversions

TL/DR: Snowflake's COPY INTO <location> doesn't support XLSX, so I wrote a generic stored procedure that does.

Why COPY INTO doesn't help

A stakeholder of mine (not a Snowflake user) recently asked if I could hand them their data as .xlsx instead of .csv, so they wouldn't have to convert it in Excel every time. Fair enough. And I thought: why not?

The natural first stop when exporting tabular data out of Snowflake is COPY INTO <location>. Point it at a stage, give it a query, done. Except: it only supports CSV, JSON, and Parquet. No XLSX. No way to convince it otherwise.

So either I keep producing CSV and ask the stakeholder to keep clicking through Excel's import wizard (which... no), or I produce XLSX somewhere else. Snowpark in a stored procedure it is.

The plan

The shape of the solution:

- Run the query inside the procedure with Snowpark

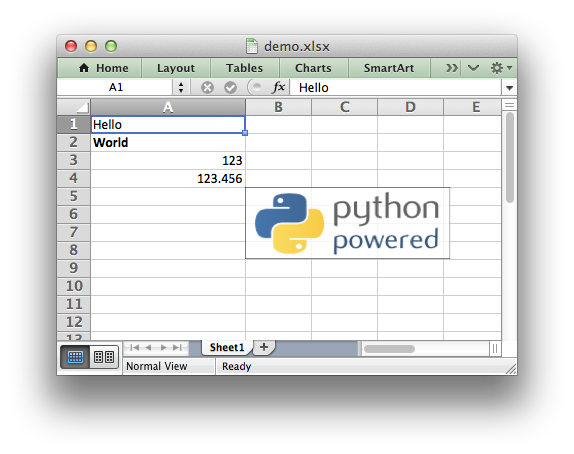

- Build an XLSX in memory with

xlsxwriter - Push the resulting bytes to a stage

Three design choices worth calling out:

Why xlsxwriter, not openpyxl or Pandas? xlsxwriter is write-only, generally faster, and uses less memory than openpyxl. Pandas would require loading everything into a DataFrame first, which is wasteful when I can just iterate over Snowpark rows directly.

Why put_stream, not PUT via SQL? PUT via SQL is blocked inside stored procedures. session.file.put_stream on the other hand supports external stages and accepts a BytesIO buffer directly, which is exactly what xlsxwriter writes into.

Why auto_compress=False? XLSX is already a ZIP-based format. Double-compressing adds overhead with zero benefit.

The procedure

Here is the full thing:

create or alter procedure meta.public.p_export_xlsx(

query string

, stage_path string

, filename string

)

returns string

language python

runtime_version = '3.12'

packages = ('snowflake-snowpark-python', 'xlsxwriter')

handler = 'main'

comment = 'Export query result as .xlsx to a stage'

execute as caller

as

$$

import io

import xlsxwriter

def main(session, query, stage_path, filename):

df = session.sql(query)

schema = df.schema

col_names = [f.name for f in schema.fields]

rows = df.collect()

buffer = io.BytesIO()

wb = xlsxwriter.Workbook(buffer, {'in_memory': True})

ws = wb.add_worksheet('Data')

for col_idx, name in enumerate(col_names):

ws.write(0, col_idx, name)

for row_idx, row in enumerate(rows, start=1):

for col_idx, name in enumerate(col_names):

val = row[name]

if val is None:

ws.write_blank(row_idx, col_idx, None)

elif isinstance(val, (int, float)):

ws.write_number(row_idx, col_idx, val)

elif isinstance(val, bool):

ws.write_boolean(row_idx, col_idx, val)

else:

ws.write_string(row_idx, col_idx, str(val))

wb.close()

buffer.seek(0)

stage_location = f'{stage_path}/{filename}'

session.file.put_stream(

buffer

, stage_location

, auto_compress=False

, overwrite=True

)

return f'Exported {len(rows)} rows to {stage_location}'

$$

;The interface is intentionally minimal: a SQL query string, a stage path, and a target filename. That's it.

execute as caller matters here: the procedure runs with the caller's privileges, so it can read whatever tables or views the caller already has access to and write to whatever stages they can access. No special service-role gymnastics needed.Calling it

Once it is in place, exporting any query is a one-liner:

call meta.public.p_export_xlsx(

'select * from consume.data.view_to_export'

, '@consume.data.named_external_stage/folder'

, 'file.xlsx'

);From there, the file lives on the stage and can be pulled by anything with access to it: a Snowflake task, a downstream tool like Power Automate, or a SharePoint sync.

Caveats

A few honest things to note (i.m.h.o. all manageable, but worth knowing):

- The current implementation calls

df.collect(), so the entire result set lives in memory inside the procedure. For tens of thousands of rows that's perfectly fine. For millions, it isn't, and a streaming variant would be needed...xlsxwritersupportsconstant_memorymode for exactly this. Then again: When was the last time someone tried to open a multi-million-row worksheet in Excel? 🤔 - Type handling is intentionally simple: numbers, booleans, blanks, and "everything else as string". Dates currently fall into the string bucket, which is good enough for my use case but trivial to extend with

write_datetimeif Excel-native date formatting matters.

And that's it: the stakeholder gets their .xlsx without converting anything outside of Snowflake 😎